Abstract

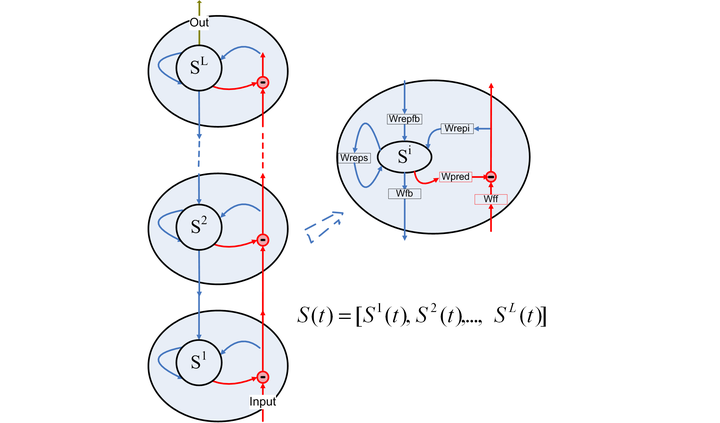

The Predictive Coding Network (PCN) is an important neural network model inspired by models of visual processing in neuroscience. It combines feedforward and feedback processing and has the architecture of a recurrent neural network (RNN). Like other RNNs, it is usually trained with backpropagation through time (BPTT). When run for an unbounded number of recurrent steps, a PCN is a dynamical system. However, stability, one of the most important properties of such a system, is rarely studied for this type of network. Inspired by reservoir computing, we investigate the stability of hierarchical RNNs from the perspective of dynamical systems and propose a sufficient condition for their echo state property (ESP). Our study shows that global stability is determined by the stability of the individual layers and by the feedback between neighboring layers. Based on this result, we propose Weight Norm Supervision, a new algorithm that controls the stability of PCN dynamics by imposing different weight norm constraints on different parts of the network. We compare our approach with other training methods in terms of stability and prediction capability. The experiments show that, among the compared methods, our algorithm learns stable PCNs with reliable prediction precision in the most effective and controllable way.
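The sketch below does not reproduce the paper's hierarchical condition or its Weight Norm Supervision algorithm. It only illustrates the classical single-reservoir sufficient condition that this line of work builds on. For the update x_{t+1} = tanh(W x_t + W_in u_t), if the largest singular value of W is below 1, the update is a contraction in the state, so trajectories started from different initial states under the same input converge (the echo state property). The helper names (`spectral_norm`, `constrain_norm`, `run`) and the bound 0.9 are hypothetical. `constrain_norm` simply rescales a single matrix, which is one assumed way of imposing a weight norm constraint.

```python
import math
import random


def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def transpose(W):
    return [list(col) for col in zip(*W)]


def spectral_norm(W, iters=200, seed=0):
    """Estimate the largest singular value of W by power iteration on W^T W."""
    rng = random.Random(seed)
    Wt = transpose(W)
    v = [rng.gauss(0.0, 1.0) for _ in range(len(W[0]))]
    for _ in range(iters):
        u = matvec(Wt, matvec(W, v))
        n = math.sqrt(sum(ui * ui for ui in u))
        if n == 0.0:
            return 0.0
        v = [ui / n for ui in u]
    Wv = matvec(W, v)  # v is (approximately) the top right singular vector
    return math.sqrt(sum(a * a for a in Wv))


def constrain_norm(W, max_norm):
    """Hypothetical constraint step: rescale W so its spectral norm is at most max_norm."""
    s = spectral_norm(W)
    if s <= max_norm:
        return [row[:] for row in W]
    scale = max_norm / s
    return [[w * scale for w in row] for row in W]


def run(W, W_in, inputs, x0):
    """Iterate x_{t+1} = tanh(W x_t + W_in u_t) over the inputs and return the final state."""
    x = list(x0)
    for u in inputs:
        pre = [a + b for a, b in zip(matvec(W, x), matvec(W_in, u))]
        x = [math.tanh(p) for p in pre]
    return x
```

A usage example under the same assumptions: a random 20-unit recurrent matrix is rescaled to spectral norm 0.9, and two runs driven by the same input but started from opposite corners of the state space end in (numerically) the same state. The argument relies on tanh being 1-Lipschitz: each step shrinks the distance between the two states by at least the factor 0.9.

```python
rng = random.Random(1)
n = 20
W = [[rng.gauss(0.0, 3.0 / math.sqrt(n)) for _ in range(n)] for _ in range(n)]
W_in = [[rng.gauss(0.0, 1.0)] for _ in range(n)]
Wc = constrain_norm(W, 0.9)
inputs = [[math.sin(0.1 * t)] for t in range(200)]
xa = run(Wc, W_in, inputs, [1.0] * n)
xb = run(Wc, W_in, inputs, [-1.0] * n)
print(max(abs(a - b) for a, b in zip(xa, xb)))  # a very small number
```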